Which Khadas SBC do you use?

VIM3 A311D

Which system do you use? Android, Ubuntu, OOWOW or others?

Ubuntu 20.04

Please describe your issue below:

After training the model, I modified the head.py file and then ran the following script to convert the yolov8n model to .nb. This object detection model has 5 classes.

The script is:

./convert --model-name yolov8n --platform onnx --model best.onnx --mean-values '0 0 0 0.00392156' --quantized-dtype asymmetric_affine --source-files ./data_1/dataset/dataset0.txt --kboard VIM3 --print-level 0

Post a console log of your issue below:

Can you please guide me on this?

@Louis-Cheng-Liu

@numbqq

Link for Model

https://drive.google.com/file/d/1aR1AuPbXcFtEEVjgsUENKDARQaSAdW3w/view?usp=sharing

Hello @Chetan_Deshmukh ,

I cannot convert your .pt model into an ONNX model.

Also, here is a thread about the same problem. You can try the solution from it.

Khadas VIM 3 NPU issues - Technical Support - Khadas Community

Although that problem has been solved, I still do not know what caused it.

If the conversion still fails, please provide your ONNX model and your complete training code.

@Louis-Cheng-Liu

Please find the .onnx model and an image to convert into .nb at the link below:

model&image.zip - Google Drive

Eagerly waiting for reply @Louis-Cheng-Liu

Hello!

I have the same problem, although everything worked before.

Can you please provide your torch/ultralytics versions?

Good news!

I tried torch 1.9.1 and it worked for me. Try it yourself, maybe it’ll help)

Thank you @Agent_kapo for the help, but even with torch 1.9.1, ultralytics 8.1.10, and Python 3.9, and with the command

./convert --model-name yolov9n --platform onnx --model ./best.onnx --input-size-list '3,640,640' --inputs images --outputs output0 --mean-values '128 128 128 0.0078125' --quantized-dtype asymmetric_affine --source-files ./data_1/dataset/dataset0.txt --kboard VIM3 --print-level 0

I am still getting the same error.

@Agent_kapo

Can you guide me on the changes to make in head.py, since I have 5 classes?

Thank you in advance

Also, I use ultralytics v8.0.228, which may be the reason it works for me.

Hello @Chetan_Deshmukh ,

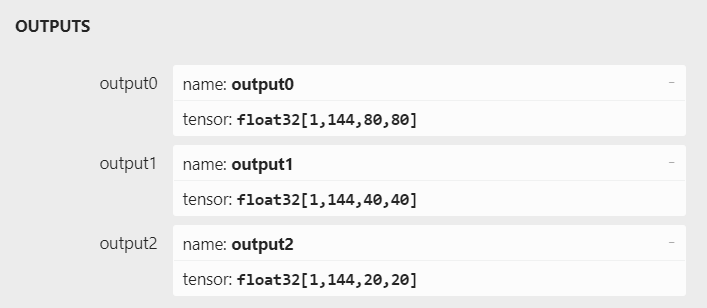

Your forward_export has not taken effect. If you installed the ultralytics package with pip, you need to add forward_export to the installed package. The correct model output looks like this.

Then try converting to .nb.

If it still fails, modify the ONNX opset version and try converting to .nb again.

import onnx

model = onnx.load("./yolov8n.onnx")

model.opset_import[0].version = 12

onnx.save(model, "./yolov8n_1.onnx")

Sorry for the late reply; we were on Spring holiday last week. If the conversion still fails, please provide your ONNX model with the correct output, and I will pass it on to our engineer.

@Louis-Cheng-Liu

I converted the .onnx to .nb, but I am getting the following error. I have 5 classes.

I am sharing the .onnx, .nb and .py files to reproduce the error. Also, please check whether the changes made in head.py are correct.

|---+ KSNN Version: v1.3 +---|

Start init neural network …

Done.

inference : 0.05578303337097168

Traceback (most recent call last):

File "yolov8n-cap.py", line 234, in <module>

input0_data = input0_data.reshape(SPAN, LISTSIZE, GRID0, GRID0)

ValueError: cannot reshape array of size 75600 into shape (1,69,20,20)

https://drive.google.com/file/d/1LzObY-90ldS-amoZBAx95wWT6DAxzQv1/view?usp=sharing
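A note on the numbers in this ValueError, as a rough sketch rather than a confirmed diagnosis. It assumes a 640x640 input (the size used in the convert command earlier in the thread), strides of 8, 16 and 32, and the ultralytics default of 16 distribution bins per box side, which would explain LISTSIZE = 5 + 64 = 69. All constant names below are illustrative and are not taken from the posted script.

```python
# Sanity-check the tensor sizes behind the reshape error.
# Assumptions (not confirmed in the thread): 640x640 input,
# strides 8/16/32, and 16 DFL bins per box side.
NUM_CLS = 5
REG_MAX = 16
INPUT_SIZE = 640
STRIDES = (8, 16, 32)

# Channels per grid cell when the export head is split per stride:
# class scores plus 4 box sides times 16 bins.
listsize = NUM_CLS + 4 * REG_MAX

# Grid side lengths for the three detection heads.
grids = [INPUT_SIZE // s for s in STRIDES]

# Element count of each per-stride output the demo script reshapes.
per_head_sizes = [listsize * g * g for g in grids]

# Element count of a single merged output of shape
# (1, 4 + NUM_CLS, total_cells), i.e. already-decoded boxes plus scores.
total_cells = sum(g * g for g in grids)
flat_default_size = (4 + NUM_CLS) * total_cells

print("LISTSIZE:", listsize)
print("per-head sizes:", per_head_sizes)
print("single merged output size:", flat_default_size)
```

Under these assumptions the single merged output has 75600 elements, which matches the size in the error, while the 20x20 head the script expects would have 27600. That would be consistent with the model having been exported with one merged output instead of three per-stride outputs.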

Hello @Chetan_Deshmukh ,

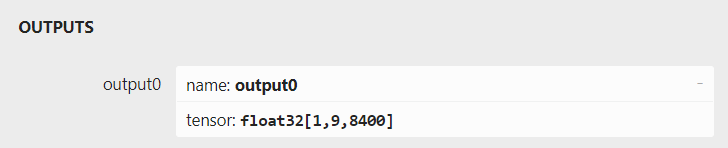

Your forward_export has not taken effect in the .pt to ONNX conversion.

Your model:

Did you install the ultralytics package with pip? If so, you should add forward_export to the installed ultralytics package, not to the git-cloned ultralytics repo. You can open your ONNX model with the Netron tool and check whether it was converted correctly.

Correct output:

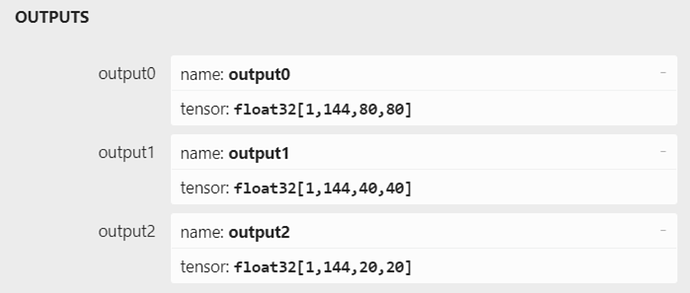
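To see which copy of ultralytics the export actually uses, a small check like the following may help. It is only a sketch: the function names are made up for illustration, and the "site-packages"/"dist-packages" test is a heuristic that will not cover every install layout (editable installs, for instance, usually point back at the cloned repo).

```python
import importlib.util


def module_origin(name):
    """Return the file Python would import `name` from, or None if not found."""
    spec = importlib.util.find_spec(name)
    return None if spec is None else spec.origin


def describe(name="ultralytics"):
    """Summarise where `name` resolves to for the current interpreter."""
    origin = module_origin(name)
    if origin is None:
        return f"{name} is not importable from this interpreter"
    # Heuristic only: pip normally installs into site-packages or dist-packages.
    in_site = "site-packages" in origin or "dist-packages" in origin
    kind = "pip-installed copy" if in_site else "local or editable checkout"
    return f"{name} is imported from {origin} ({kind})"


if __name__ == "__main__":
    print(describe())
```

Run it with the same Python interpreter that performs the export. The head.py that needs the forward_export change is the one under the directory it prints.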

@Louis-Cheng-Liu after implementing the steps you mentioned, I am able to get an .onnx model with the outputs you described, but I am not able to convert the .onnx to .nb using the following command:

./convert --model-name yolov8n --platform onnx --model ./best.onnx --mean-values '0 0 0 0.00392156' --quantized-dtype asymmetric_affine --source-files /home/mantra_rnd/Desktop/aml_npu_sdk/acuity-toolkit/python/data_1/dataset/dataset0.txt --kboard VIM3 --print-level 0

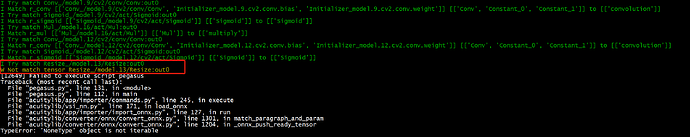

error:

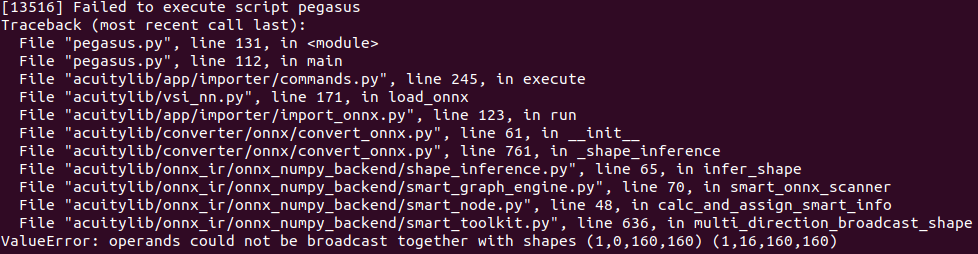

[57034] Failed to execute script pegasus

Traceback (most recent call last):

File "pegasus.py", line 131, in <module>

File "pegasus.py", line 112, in main

File "acuitylib/app/importer/commands.py", line 245, in execute

File "acuitylib/vsi_nn.py", line 171, in load_onnx

File "acuitylib/app/importer/import_onnx.py", line 127, in run

File "acuitylib/converter/onnx/convert_onnx.py", line 1301, in match_paragraph_and_param

File "acuitylib/converter/onnx/convert_onnx.py", line 1204, in _onnx_push_ready_tensor

TypeError: 'NoneType' object is not iterable

model link:

https://drive.google.com/file/d/1dTuADaa_CM7ivh39C1HkypPSv0GtZqkl/view?usp=sharing

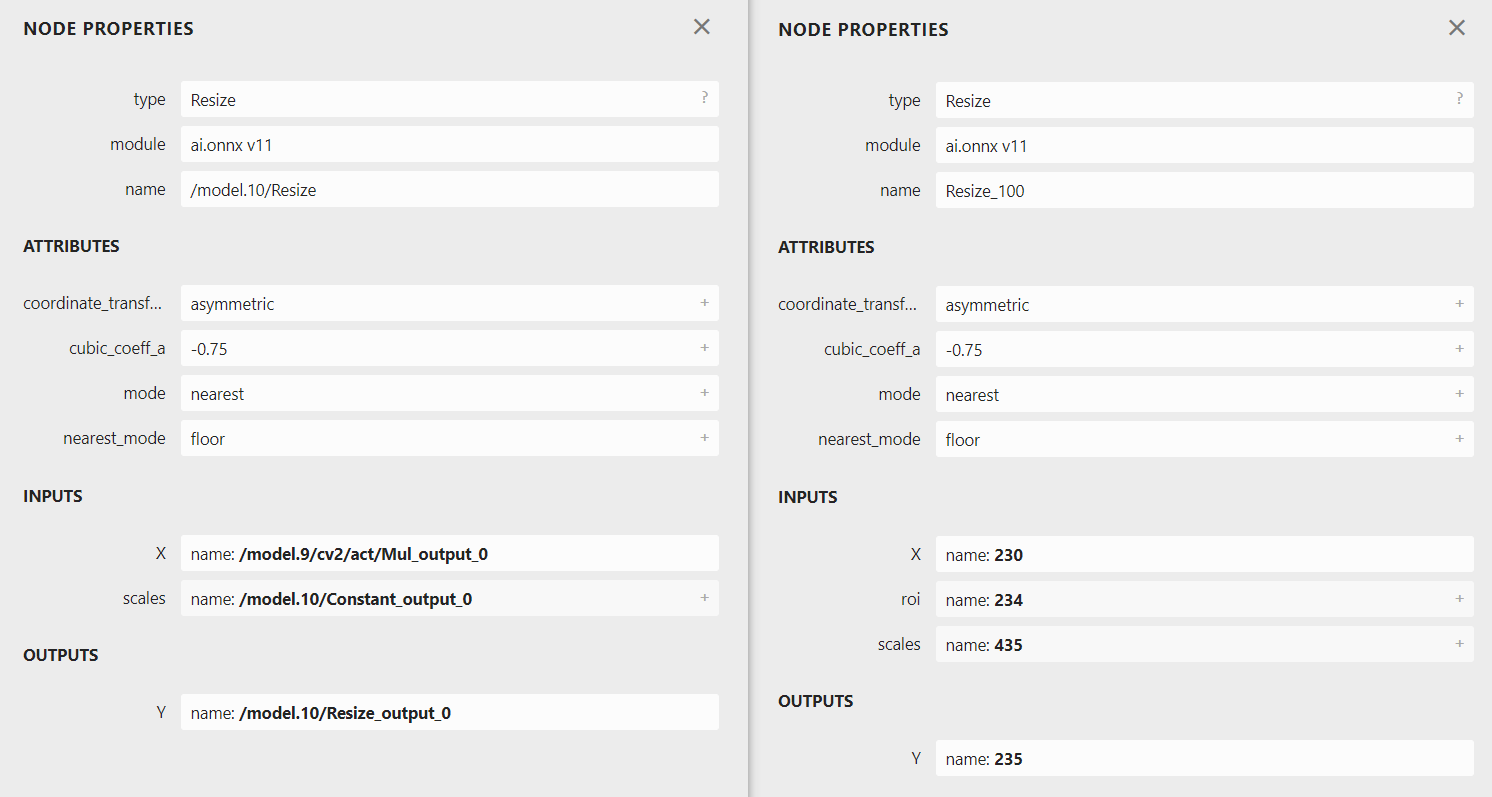

Hello @Chetan_Deshmukh ,

Have you made any other changes, for example to the ultralytics or PyTorch version?

The error is related to the Resize operator.

Your earlier model converted successfully, though. The Resize node differs between the earlier model and the current one.

You can refer to my versions:

torch==1.10.1

ultralytics==8.0.86
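A quick way to compare a local environment against the two versions listed above is sketched here. The helper names are made up for illustration, and an exact string comparison is assumed to be good enough; note that a torch build may report a suffix such as a CUDA tag, which would show up as "differs" even when the base version is the same.

```python
from importlib import metadata

# Reference versions quoted in the post above.
REFERENCE = {"torch": "1.10.1", "ultralytics": "8.0.86"}


def installed_versions(packages):
    """Map each package name to its installed version, or None if missing."""
    found = {}
    for name in packages:
        try:
            found[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            found[name] = None
    return found


def report(reference=REFERENCE):
    """Print installed versus reference versions and return what was found."""
    current = installed_versions(reference)
    for name, wanted in reference.items():
        have = current[name]
        status = "match" if have == wanted else "differs"
        print(f"{name}: installed={have} reference={wanted} ({status})")
    return current


if __name__ == "__main__":
    report()
```

Run it inside the environment used for the .pt to ONNX export, since that is where the version mismatch would matter.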

Thank you @Louis-Cheng-Liu for your wonderful support. I have succeeded in running the model on the NPU with good inference results.

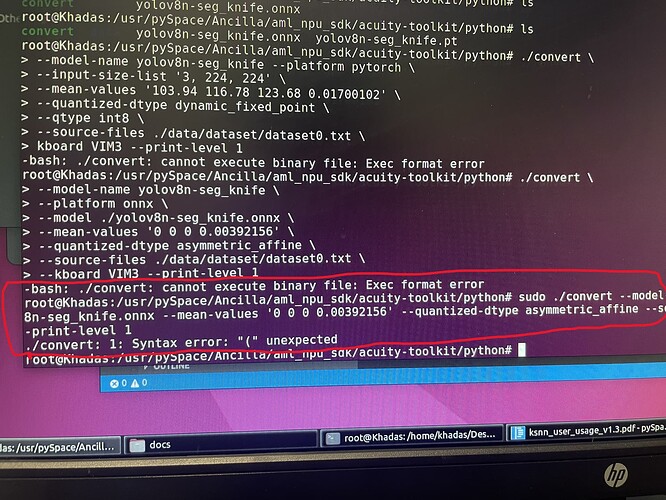

Chiming in to add another error I'm coming across as well:

I am converting a yolov8 segmentation model and I keep receiving this error. Is something wrong with the code in the executable?

I have checked my input a dozen times now and I’m entering it correctly. Please help.