The three conversion scripts are as follows:

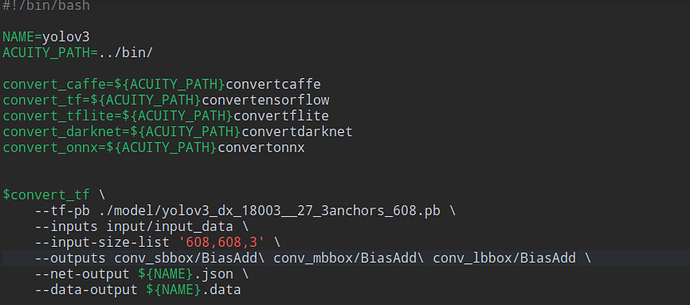

1. `0_import_model`

```bash
NAME=yolov3
ACUITY_PATH=…/bin/

convert_caffe=${ACUITY_PATH}convertcaffe
convert_tf=${ACUITY_PATH}convertensorflow
convert_tflite=${ACUITY_PATH}convertflite
convert_darknet=${ACUITY_PATH}convertdarknet
convert_onnx=${ACUITY_PATH}convertonnx

$convert_tf \
    --tf-pb ./model/yolov3_dx_18003__27_3anchors_608.pb \
    --inputs input/input_data \
    --input-size-list '608,608,3' \
    --outputs conv_sbbox/BiasAdd\ conv_mbbox/BiasAdd\ conv_lbbox/BiasAdd \
    --net-output ${NAME}.json \
    --data-output ${NAME}.data
```

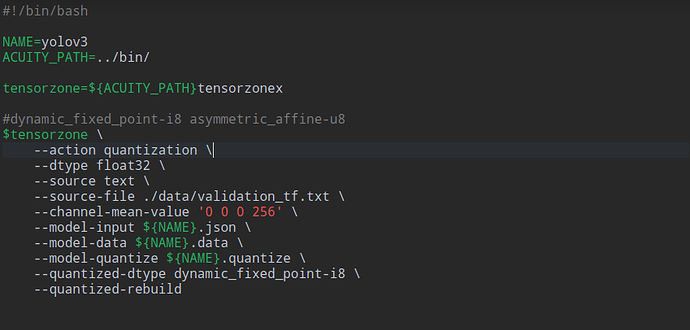
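In the `--outputs` line, the backslash before each space (`\ `) escapes that space, so bash hands all three node names to the converter as one single argument, exactly as if they were wrapped in quotes. What the converter then does with that string is up to the tool. The `show_args` helper below is hypothetical and only for illustration: it prints each argument it receives so the splitting is visible.

```bash
#!/usr/bin/env bash
# show_args: hypothetical helper that prints every argument it receives,
# bracketed and numbered, to show how bash split the command line.
show_args() {
  local i=1
  local a
  for a in "$@"; do
    printf 'arg%d=[%s]\n' "$i" "$a"
    i=$((i + 1))
  done
}

# Escaped spaces: the three node names stay together in ONE argument.
show_args --outputs conv_sbbox/BiasAdd\ conv_mbbox/BiasAdd\ conv_lbbox/BiasAdd

# Quoting produces the same two arguments.
show_args --outputs 'conv_sbbox/BiasAdd conv_mbbox/BiasAdd conv_lbbox/BiasAdd'
```

Both calls print `arg1=[--outputs]` followed by `arg2=[conv_sbbox/BiasAdd conv_mbbox/BiasAdd conv_lbbox/BiasAdd]`.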

2. `1_quantize_model`

```bash
NAME=yolov3
ACUITY_PATH=…/bin/

tensorzone=${ACUITY_PATH}tensorzonex

# Options for --quantized-dtype: dynamic_fixed_point-i8, asymmetric_affine-u8
$tensorzone \
    --action quantization \
    --dtype float32 \
    --source text \
    --source-file ./data/validation_tf.txt \
    --channel-mean-value '0 0 0 255.0' \
    --model-input ${NAME}.json \
    --model-data ${NAME}.data \
    --model-quantize ${NAME}.quantize \
    --quantized-dtype dynamic_fixed_point-i8 \
    --quantized-rebuild
```

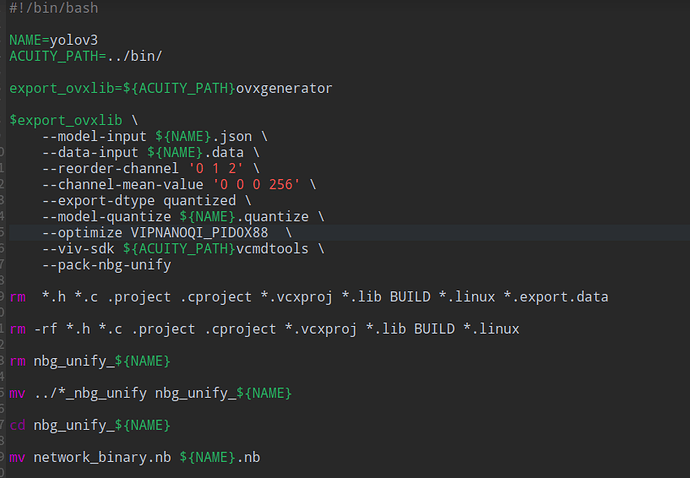
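Both the quantize and the export scripts pass `--channel-mean-value '0 0 0 255.0'`. Assuming the four values are three per-channel means followed by one scale, applied as `(x - mean) / scale` (an assumption, though it is what the `255.0` here suggests; check the Acuity documentation for your version), this setting maps 8-bit pixel values from 0..255 into 0..1. The hypothetical `normalize_pixel` helper below only performs that arithmetic for a single pixel.

```bash
#!/usr/bin/env bash
# normalize_pixel: hypothetical helper computing (x - mean) / scale per channel,
# ASSUMING --channel-mean-value 'm0 m1 m2 scale' is interpreted this way.
normalize_pixel() {
  local cmv="$1"   # e.g. "0 0 0 255.0": three means, then the scale
  local px="$2"    # e.g. "0 128 255": one pixel, three channel values
  awk -v cmv="$cmv" -v px="$px" 'BEGIN {
    split(cmv, m, " ")
    split(px, p, " ")
    scale = m[4]
    for (i = 1; i <= 3; i++)
      printf "%s%.4f", (i > 1 ? " " : ""), (p[i] - m[i]) / scale
    printf "\n"
  }'
}

normalize_pixel "0 0 0 255.0" "0 128 255"        # setting used in the scripts
normalize_pixel "128 128 128 128" "0 128 255"    # comparison value, hypothetical
```

Under the same assumption, the first call prints `0.0000 0.5020 1.0000` and the comparison setting prints `-1.0000 0.0000 0.9922`, i.e. roughly a -1..1 range.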

3. `2_export_case_code`

```bash
NAME=yolov3
ACUITY_PATH=…/bin/

export_ovxlib=${ACUITY_PATH}ovxgenerator

$export_ovxlib \
    --model-input ${NAME}.json \
    --data-input ${NAME}.data \
    --reorder-channel '2 1 0' \
    --channel-mean-value '0 0 0 255.0' \
    --export-dtype quantized \
    --model-quantize ${NAME}.quantize \
    --optimize VIPNANOQI_PID0X88 \
    --viv-sdk ${ACUITY_PATH}vcmdtools \
    --pack-nbg-unify
```
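`--reorder-channel` takes a permutation of the three channel indices, so it concerns the order of the colour channels within each pixel (for example RGB versus BGR), not the 608×608×3 height/width/channel dimensions. The sketch below assumes the tool builds output channel `i` from input channel `order[i]`; that direction is an assumption, but for `'2 1 0'` it does not matter because that permutation is its own inverse. The `reorder_pixel` helper is hypothetical.

```bash
#!/usr/bin/env bash
# reorder_pixel: hypothetical helper. Builds output channel i from input
# channel order[i], ASSUMED to mirror what --reorder-channel does per pixel.
reorder_pixel() {
  local -a order px out
  read -r -a order <<< "$1"   # e.g. "2 1 0"
  read -r -a px <<< "$2"      # e.g. "10 20 30" (channels 0, 1, 2)
  out=()
  local idx
  for idx in "${order[@]}"; do
    out+=("${px[$idx]}")
  done
  echo "${out[*]}"
}

reorder_pixel "2 1 0" "10 20 30"   # swaps channels 0 and 2
reorder_pixel "0 1 2" "10 20 30"   # identity: order unchanged
```

The first call prints `30 20 10` and the second `10 20 30`; in neither case does the number of channels or the image size change.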

First question: in `./data/validation.txt`, a line looks like `./path/img.jpg, 813`. What does the 813 mean? For example, our model input is 608×608×3; should 813 be changed to 608?

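Second question: `--reorder-channel '2 1 0'`. Given our model input of 608×608×3 above, should this be `0 1 2` or `2 1 0`? I tried both, and both seem to fail at runtime with the following error: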

```
model.width:3
model.height:608
model.channel:608
E detect_api:[Inputsize not match! net VS img is height:608vs608, width:channel:608vs3]
```
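One observation about the log, not a diagnosis: the values it prints are exactly the three numbers declared in `--input-size-list '608,608,3'` read back to front. The hypothetical sketch below reverses that list and labels the result width, height, channel, which reproduces `model.width:3`, `model.height:608` and `model.channel:608`. If that reading is right, the mismatch would be a dimension-order (layout) issue rather than a colour-channel-order issue, which would fit both `'0 1 2'` and `'2 1 0'` failing the same way.

```bash
#!/usr/bin/env bash
# Hypothetical illustration: reverse the declared input size and label it
# width/height/channel to compare against the runtime log above.
input_size_list='608,608,3'

IFS=',' read -r -a dims <<< "$input_size_list"   # dims=(608 608 3)

# Reverse the array.
rev=()
for (( i = ${#dims[@]} - 1; i >= 0; i-- )); do
  rev+=("${dims[i]}")
done

printf 'model.width:%s\n'   "${rev[0]}"
printf 'model.height:%s\n'  "${rev[1]}"
printf 'model.channel:%s\n' "${rev[2]}"
```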

Many thanks! @Frank