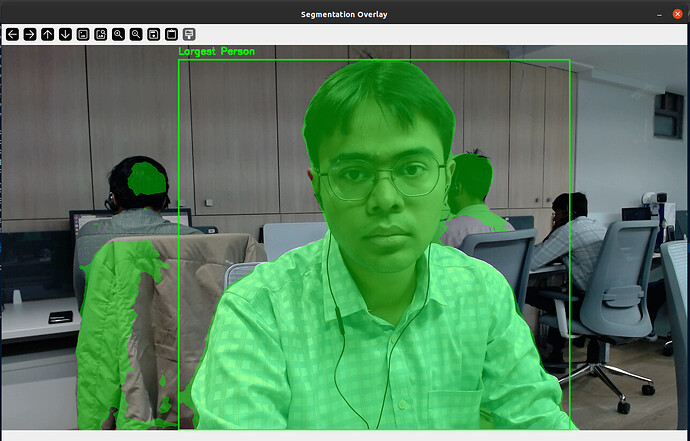

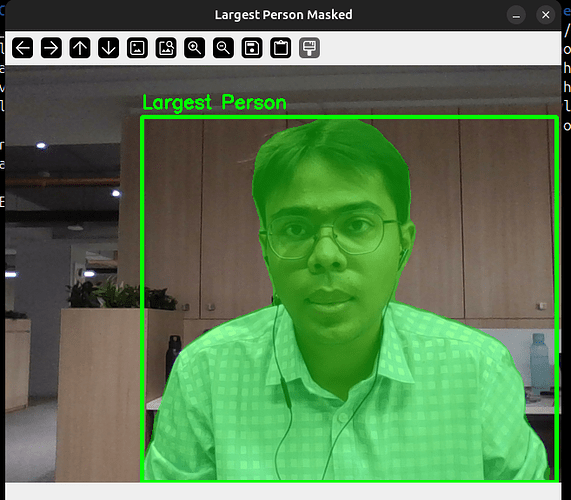

I am trying to run inference and draw a mask over a camera feed for the person with the largest bounding box, using the yolov8n-seg.pt model. On an x86 device the script runs perfectly, but when I run the converted NPU model (nb/so) on a VIM3 device, there is extra garbage in the mask around the person. Could you help me understand what causes this extra garbage with the NPU model and how to fix it? @numbqq @Louis-Cheng-Liu

@MrArthor Try verifying the accuracy of the output with an 8-bit quantized model on your host computer before converting the model to the NPU format. Use a static image to compare the outputs; this may be the result of quantization artifacts.
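A rough sketch of how that static-image comparison could be scored, assuming the host mask and the NPU mask have already been saved as binary NumPy arrays of the same shape. The names `mask_iou`, `host_mask` and `npu_mask` are hypothetical, and the arrays below are toy stand-ins rather than real model output:

```python
import numpy as np

def mask_iou(mask_a: np.ndarray, mask_b: np.ndarray) -> float:
    """Intersection over union of two binary masks of the same shape."""
    a = mask_a.astype(bool)
    b = mask_b.astype(bool)
    union = np.logical_or(a, b).sum()
    if union == 0:
        return 1.0  # both masks empty: treat them as identical
    return float(np.logical_and(a, b).sum() / union)

# Toy stand-ins (assumption): in practice these would come from the host
# model and the converted NPU model run on the same static image.
host_mask = np.zeros((6, 6), dtype=np.uint8)
host_mask[1:5, 1:5] = 1            # 16 "person" pixels
npu_mask = host_mask.copy()
npu_mask[0, 0:4] = 1               # 4 extra "garbage" pixels

iou = mask_iou(host_mask, npu_mask)
# Pixels that are set in the NPU mask but not in the host mask.
extra = int(np.logical_and(npu_mask == 1, host_mask == 0).sum())
print(f"IoU={iou:.3f}, extra pixels={extra}")
```

A low IoU or a large count of extra pixels on the quantized model, but not on the float model, would point toward quantization; similar numbers on both would point toward the model or the post-processing.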

@Electr1 I am using Ultralytics' pre-trained yolov8n-seg model, and with a static image there were leaks in the mask as well. The leakage is worst when two people are standing one in front of the other: the person under observation is the one with the bigger bounding box, but the resulting mask covers the other person too, even though no bounding box is produced for them.

@MrArthor Perhaps that is a characteristic of the model, in which case it has nothing to do with the conversion tool's quantization artifacts. You may need to adjust the confidence threshold or some other parameters.
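One parameter-level thing to try, sketched below under assumptions: if the custom NPU post-processing thresholds the mask over the whole frame, restricting it to the selected person's bounding box should remove anything that leaks outside the box. The functions `largest_box` and `crop_mask_to_box`, the `(x1, y1, x2, y2)` box layout, and the toy data are all hypothetical and not taken from the thread's script:

```python
import numpy as np

def largest_box(boxes: np.ndarray) -> int:
    """Index of the box with the largest area; boxes are (x1, y1, x2, y2)."""
    areas = (boxes[:, 2] - boxes[:, 0]) * (boxes[:, 3] - boxes[:, 1])
    return int(np.argmax(areas))

def crop_mask_to_box(mask_prob: np.ndarray, box, threshold: float = 0.5) -> np.ndarray:
    """Threshold a per-pixel mask probability map and zero everything outside the box."""
    h, w = mask_prob.shape
    x1, y1, x2, y2 = box
    # Clamp the box to the image so the slices below stay valid.
    x1, x2 = np.clip([x1, x2], 0, w).astype(int)
    y1, y2 = np.clip([y1, y2], 0, h).astype(int)
    out = np.zeros((h, w), dtype=np.uint8)
    out[y1:y2, x1:x2] = mask_prob[y1:y2, x1:x2] > threshold
    return out

# Toy data (assumption): one mask map that also fires on a second person.
mask_prob = np.zeros((8, 8), dtype=np.float32)
mask_prob[2:6, 1:4] = 0.9          # person under observation
mask_prob[2:6, 5:8] = 0.8          # leak onto the other person
boxes = np.array([[1, 2, 4, 6],    # big box: person under observation
                  [5, 3, 7, 5]])   # small box: other person

idx = largest_box(boxes)
clean = crop_mask_to_box(mask_prob, boxes[idx], threshold=0.5)
print(idx, int(clean.sum()))
```

Cropping only helps with leakage outside the box; where the two boxes overlap, raising `threshold` is the remaining knob to experiment with.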

Cheers

@Electr1 OK, I'll try another yolov8-seg model and see how the segmentation looks, or just experiment with the threshold and other parameters.