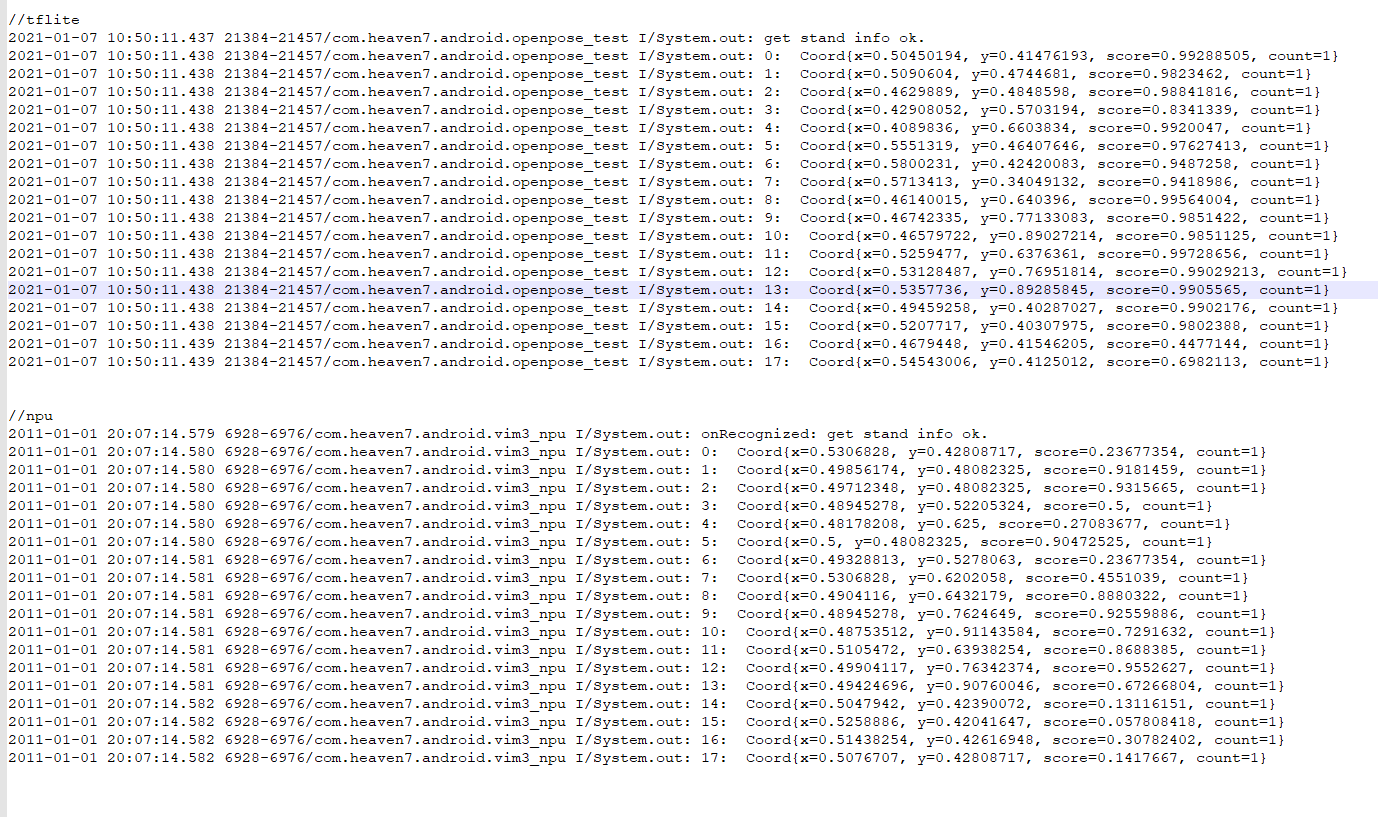

项目是跑openpose的(android 平台)。 我用的google的posenet. 基于tensorflowlite

目前已经跑通。测试发现性能很差。

用npu之前我用的tensorflowlite-api: vim3 上测试耗时不到300ms.

但是用了npu 之后耗时470ms左右. 而且470是执行vnn_ProcessGraph花的时间…

vim_npu: Start run graph [1] times...

vim_npu: Run the 1 time: 464.00ms or 464475.00us

vim_npu: vxProcessGraph execution time:

vim_npu: Total 464.00ms or 464593.00us

vim_npu: Average 464.59ms or 464593.00us

下面是转化case代码后的ProcessGraph 关键代码。求教怎么回事?

static vsi_status vnn_ProcessGraph

(

vsi_nn_graph_t *graph

)

{

vsi_status status = VSI_FAILURE;

int32_t i,loop;

char *loop_s;

uint64_t tmsStart, tmsEnd, sigStart, sigEnd;

float msVal, usVal;

status = VSI_FAILURE;

loop = 1; /* default loop time is 1 */

loop_s = getenv("VNN_LOOP_TIME");

if(loop_s)

{

loop = atoi(loop_s);

}

/* Run graph */

tmsStart = get_perf_count();

printf("Start run graph [%d] times...\n", loop);

for(i = 0; i < loop; i++)

{

sigStart = get_perf_count();

status = vsi_nn_RunGraph( graph );

if(status != VSI_SUCCESS)

{

printf("Run graph the %d time fail\n", i);

}

TEST_CHECK_STATUS( status, final );

sigEnd = get_perf_count();

msVal = (sigEnd - sigStart)/1000000;

usVal = (sigEnd - sigStart)/1000;

printf("Run the %u time: %.2fms or %.2fus\n", (i + 1), msVal, usVal);

}

tmsEnd = get_perf_count();

msVal = (tmsEnd - tmsStart)/1000000;

usVal = (tmsEnd - tmsStart)/1000;

printf("vxProcessGraph execution time:\n");

printf("Total %.2fms or %.2fus\n", msVal, usVal);

printf("Average %.2fms or %.2fus\n", ((float)usVal)/1000/loop, ((float)usVal)/loop);

final:

return status;

}