@Frank @numbqq @Louis-Cheng-Liu

Hello, I’m using a Khadas board and I want to install TensorFlow. Please help. I use a VIM3 Pro and the Ubuntu version is 20.04.

I followed this link, but it doesn’t work.

The package does not download, so I can’t even install it.

Hello, @rayeleigh ,

Those links are invalid. Please install the packages with pip install xxx.

Tip: that version is too old.

However, the VIM3 does not need TensorFlow, because TensorFlow cannot use the NPU on the VIM3.

Why do you want to install TensorFlow on the VIM3?

@Louis-Cheng-Liu My sign language model is a TensorFlow model. My code is written in Python and imports TensorFlow, so TensorFlow has to be installed on the board.

@Louis-Cheng-Liu

import tkinter as tk
from tkinter import *
import pygame
import cv2
import time
import tensorflow as tf
import numpy as np

window = tk.Tk()

# Module-level state shared by the callbacks below
tot_text = []  # recognized characters, in order
encText = ""   # tot_text joined into one string
count = 0      # number of recognition runs

window.geometry('1280x720')
window.title("Hangul")

label = tk.Label(window)
label.place(x=0, y=0)

frame1 = Frame(window, width=1280, height=720)
frame2 = Frame(window, width=1280, height=720)
frame3 = Frame(window, width=1280, height=720)
frame4 = Frame(window, width=1280, height=720)
frame5 = Frame(window, width=1280, height=720)
frame6 = Frame(window, width=1280, height=720)

# Stack the frames in the same grid cell; tkraise() selects the visible one
frame1.grid(row=0, column=0)
frame1.propagate(0)
frame2.grid(row=0, column=0)
frame2.propagate(0)
frame3.grid(row=0, column=0)
frame3.propagate(0)
frame4.grid(row=0, column=0)
frame4.propagate(0)
frame6.grid(row=0, column=0)
frame6.propagate(0)

# Initialize predicted_class_label
predicted_class_label = ""

def openFrame(frame):
    frame.tkraise()


def preprocess(image):
    # Resize to 28x28, convert to grayscale, scale to [0, 1],
    # then add the batch and channel axes
    resized_image = cv2.resize(image, (28, 28))
    gray_image = cv2.cvtColor(resized_image, cv2.COLOR_BGR2GRAY)
    normalized_image = gray_image / 255.0
    reshaped_image = np.reshape(normalized_image, (1, 28, 28, 1))
    return reshaped_image


def signlanguage():
    global predicted_class_label
    global class_labels
    global count
    global tot_text
    global encText
    count = count + 1

    # Load the model
    model_path = 'eng_sign_lang_cnn_epochs50_27.h5'
    model = tf.keras.models.load_model(model_path)

    # Class labels
    class_labels = ['A', 'B', 'C', 'D', 'E', 'F', 'G', 'H', 'I', 'K', 'L', 'M', 'N',
                    'O', 'P', 'Q', 'R', 'S', 'T', 'U', 'V', 'W', 'X', 'Y']

    cap = cv2.VideoCapture(0)  # camera index (usually 0)
    while True:
        ret, frame = cap.read()
        if not ret:
            break
        blurred_frame = cv2.GaussianBlur(frame, (5, 5), 0)
        processed_frame = preprocess(blurred_frame)
        predictions = model.predict(processed_frame)
        predicted_class_index = np.argmax(predictions[0])
        predicted_class_label = class_labels[predicted_class_index]
        text = f"Predicted Class: {predicted_class_label}"
        cv2.putText(frame, text, (10, 30), cv2.FONT_HERSHEY_SIMPLEX, 1, (0, 255, 0), 2)
        print(predicted_class_label)
        cv2.imshow('Frame', frame)
        # Press 'z' to quit
        if cv2.waitKey(1) & 0xFF == ord('z'):
            break

    cap.release()
    cv2.destroyAllWindows()

    # tot_text is a list of strings; append the last prediction to it
    tot_text.append(predicted_class_label)
    # Join the list into a single text
    encText = ''.join(tot_text)
    print(encText)
    print(count)

def Output():
    global encText
    btn_2.config(text=encText)


def translation():
    # Call the translation API
    import urllib.request
    import urllib.parse
    global encText
    encText = ''.join(tot_text)
    print(encText)
    client_id = "SGnoCu207DwIN6PKhSwo"
    client_secret = "lRUBh6ujXI"
    encText = urllib.parse.quote(encText)
    # Source language English, target language Korean
    data = "source=en&target=ko&text=" + encText
    url = "https://openapi.naver.com/v1/papago/n2mt"
    request = urllib.request.Request(url)
    request.add_header("X-Naver-Client-Id", client_id)
    request.add_header("X-Naver-Client-Secret", client_secret)
    response = urllib.request.urlopen(request, data=data.encode("utf-8"))
    rescode = response.getcode()
    if rescode == 200:
        response_body = response.read()
        # Print the translated text and show it on the button
        print(response_body.decode('utf-8'))
        btn_3.config(text=response_body.decode('utf-8'))
    else:
        # rescode is an int, so convert it before concatenating
        print("Error Code:" + str(rescode))

def Reset():
    global tot_text
    global encText
    tot_text = []
    encText = ''


def spacing():
    global tot_text
    global encText
    tot_text.append(" ")  # add a space to the list of strings
    encText = ''.join(tot_text)  # join the list into a single string

btn = Button(frame4, text='수어인식', command=signlanguage)  # "Recognize sign language"
btn_2 = Button(frame4, text='현재 텍스트', command=Output)  # "Current text"
btn_3 = Button(frame4, text='번역', command=translation)  # "Translate"
btn_4 = Button(frame4, text='초기화', command=Reset)  # "Reset"
btn_5 = Button(frame4, text='띄어쓰기', command=spacing)  # "Space"

btn.config(width=15, height=15)
btn_2.config(width=15, height=15)
btn_3.config(width=70, height=15)
btn_4.config(width=15, height=15)
btn_5.config(width=15, height=15)

btn.place(x=260, y=1)
btn_2.place(x=260, y=130)
btn_3.place(x=540, y=260)
btn_4.place(x=260, y=260)
btn_5.place(x=260, y=390)

label1 = Label(frame6, text="수어 번역기", font=("궁서체", 50))  # "Sign language translator"
label2 = Label(frame4, text=predicted_class_label, font=("궁서체", 50))

# Navigation buttons: "자음" = consonants, "모음" = vowels,
# "음성 인식" = speech recognition, "수어 번역" = sign language translation
btnToFrame1 = Button(frame4, text="자음", padx=10, pady=10, command=lambda: openFrame(frame1))
btnToFrame2 = Button(frame1, text="모음", padx=10, pady=20, command=lambda: openFrame(frame2))
btnToFrame4 = Button(frame6, text="음성 인식", padx=24, pady=10, command=lambda: openFrame(frame6))
btnToFrame6 = Button(frame6, text="수어 번역", padx=24, pady=10, command=lambda: openFrame(frame4))

btnToFrame1.pack()
btnToFrame2.pack()
label1.pack(padx=0, pady=0)
btnToFrame4.pack()
btnToFrame6.pack()

window.mainloop()
pygame.quit()

@Louis-Cheng-Liu

This is my code. Is there something I have misunderstood?

I used the TensorFlow model directly. If I convert the model to run on the NPU, do I no longer need TensorFlow?

Hello @rayeleigh ,

Model conversion does not have to be done on the VIM3. It can also be done on a PC.

I understand. Can you tell me if this is correct? If I convert the TensorFlow model to a KSNN model, can I just import the KSNN Python API and use it? But the conversion has to be done on a computer!

Hello @rayeleigh ,

Yes, that is right. I have not tried converting a model on the VIM3 itself, but I think it should work. Good luck!

@Louis-Cheng-Liu I even ran the command to convert the TensorFlow .h5 model to KSNN on my notebook, but no file is created.

Do I have to use the .pb format?

Hello @rayeleigh ,

Did it report an error? .h5 is a Keras model, so you should change the platform from tensorflow to keras.

Uneasy…

…?

Is this command wrong?

./convert \
--model-name my_model \
--platform tensorflow \
--model /home/khadas/Desktop \
--input-size-list '128,128,1' \
--inputs input \
--outputs output \
--mean-values '234' \
--quantized-dtype asymmetric_affine \
--source-files /home/khadas/Downloads/trainingData \
--kboard VIM3 \
--print-level 1

Hello @rayeleigh ,

An .h5 model is a Keras model, so the platform should be changed from tensorflow to keras. Make sure your model was saved with model.save().

The mean-values value is not right. This parameter takes four numbers. In your code above, the image is divided by 255.0, so it should be ‘0 0 0 0.00392156’ (0.00392156 = 1 / 255).
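To see why those four numbers match the preprocessing, here is a minimal sketch. It assumes the converter applies (pixel - mean) * scale, with the first three numbers as per-channel means and the fourth as the scale. That reading is an assumption inferred from the values used in this thread, not something quoted from the KSNN documentation, and the function name is hypothetical.

```python
def apply_mean_scale(pixel, mean, scale):
    """Assumed converter-side normalization: (pixel - mean) * scale."""
    return (pixel - mean) * scale


scale = 1 / 255  # about 0.00392156, the fourth number in --mean-values

for pixel in (0, 128, 255):
    training_value = pixel / 255.0                       # what the Python code does
    converted_value = apply_mean_scale(pixel, 0, scale)  # mean 0, scale 1/255
    print(pixel, training_value, converted_value)
```

If the two printed columns agree for every pixel value, the converted model sees the same input range as the model did in training.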

The source-files parameter needs a txt file that lists the image paths, not a directory path.
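If the images are spread over sub-folders, a list file can be generated with a few lines of Python. This is only a sketch: it assumes the txt file holds one image path per line, and the function name, the suffix set and the output file name are hypothetical choices, not part of KSNN.

```python
from pathlib import Path

# Image extensions to include (an assumption; adjust to your data)
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".bmp"}


def write_dataset_list(image_root, list_path):
    """Write one image path per line and return how many were written."""
    root = Path(image_root)
    paths = sorted(
        p for p in root.rglob("*")
        if p.is_file() and p.suffix.lower() in IMAGE_SUFFIXES
    )
    Path(list_path).write_text("".join(f"{p}\n" for p in paths), encoding="utf-8")
    return len(paths)
```

For example, write_dataset_list("/home/khadas/Downloads/trainingData", "dataset.txt") would walk the folder from the command above, and dataset.txt could then be passed to --source-files. Whether the tool wants absolute or relative paths is best checked against the demo README.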

We have made some demos. Every example has a README that covers converting and running the model. You can refer to them.

KSNN Usage [Khadas Docs]

Which parameter values of the model should I look for? How should I write the command? What do I have to change now?

@Louis-Cheng-Liu

Hello @rayeleigh ,

You need to know your model’s platform and the normalization applied before the model gets its input. If you want to use a TensorFlow model, you also need to know the input name, input size and output name.

Also, .pb is a model saved by TensorFlow and .h5 is a model saved by Keras. Please choose the right platform.
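As a small illustration of that rule, the platform value can be picked from the file extension. The mapping below only covers the two formats mentioned in this thread, and the helper name is hypothetical.

```python
from pathlib import Path

# Extension-to-platform mapping as described in this thread
PLATFORM_BY_SUFFIX = {".pb": "tensorflow", ".h5": "keras"}


def guess_platform(model_path):
    """Return the --platform value for a model file, based on its extension."""
    suffix = Path(model_path).suffix.lower()
    try:
        return PLATFORM_BY_SUFFIX[suffix]
    except KeyError:
        raise ValueError(f"no platform known for {suffix!r}") from None


print(guess_platform("my_model.h5"))
```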

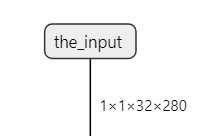

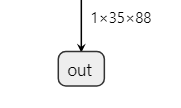

You should give the right names for the inputs and outputs. They are determined by your model. For example, the images above show my model’s input and output.

So I should write

--inputs the_input --outputs out --input-size-list '1, 32, 280'

Normalization is the preprocessing applied to the input during training, before it is fed to the model.

The command is used as follows.

./convert --model-name inceptionv3 --platform tensorflow --model /home/yan/yan/Yan/models-zoo/tensorflow/inception/inception_v3_2016_08_28_frozen.pb --input-size-list '299,299,3' --inputs input --outputs InceptionV3/Predictions/Reshape_1 --mean-values '128 128 128 0.0078125' --quantized-dtype asymmetric_affine --source-files ./data/dataset/dataset0.txt --kboard VIM3 --print-level 1
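Because the command is long, it can help to assemble it from a dictionary and print it before running it. The sketch below uses only the flags that appear in the command above. The model and dataset file names are hypothetical placeholders, and the helper is not part of KSNN.

```python
import shlex


def build_convert_command(options, executable="./convert"):
    """Turn a {flag: value} mapping into an argument list for the convert tool."""
    args = [executable]
    for flag, value in options.items():
        args += [f"--{flag}", str(value)]
    return args


# Placeholder values; replace them with the ones for your own model
options = {
    "model-name": "my_model",
    "platform": "tensorflow",
    "model": "my_model.pb",
    "input-size-list": "128,128,1",
    "inputs": "input",
    "outputs": "output",
    "mean-values": "0 0 0 0.00392156",
    "quantized-dtype": "asymmetric_affine",
    "source-files": "dataset.txt",
    "kboard": "VIM3",
    "print-level": 1,
}

cmd = build_convert_command(options)
print(shlex.join(cmd))  # shell-quoted form, for checking before running
```

The list in cmd can be passed to subprocess.run(cmd, check=True) from the directory that contains the convert tool, which avoids shell quoting mistakes around values such as the mean-values string.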

Sorry, I do not know the details of your model, so I cannot tell which parameters should be changed or to what. I suggest you refer to our demos. Every platform has an example there, and each folder contains a README with the conversion command.

khadas/ksnn: Khadas Software Neural Network (github.com)

Clone

git clone --recursive https://github.com/khadas/ksnn.git

@Louis-Cheng-Liu

I understand your explanation…

But there’s a problem…

What is Custom>Adam?

You can check the information about my model in the pictures.

Another thing I’m curious about: the model data is not a single picture, it is organized in folders.

What should I do?

And this is my command:

./convert \
--model-name my_model \
--platform keras \
--model /home/gun/다운로드/my_model.h5 \
--input-size-list '128,128,1' \
--inputs conv2d_4 \
--outputs dense_10 \
--mean-values '0 0 0 0.00392156' \
--quantized-dtype asymmetric_affine \
--source-files ./home/gun/다운로드/data.txt \
--kboard VIM3 --print-level 1

@Louis-Cheng-Liu

I want the right answer.

…

Hello @rayeleigh ,

Your model file has the optimizer saved in it. The optimizer is only used during training, so you should save the model without it.

Here is my solution: reload the model without the optimizer and save it again.

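from tensorflow.keras.models import load_model

# compile=False loads the model without compiling it, so no optimizer is attached
basemodel = load_model("model/my_model.h5", compile=False)
# Save the uncompiled model to a new file
basemodel.save("model/my_model_1.h5")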

And here are the names of your model’s input and output. However, they are not needed when converting a Keras model.

I converted the re-saved model successfully. I used aml_npu_sdk for the conversion, and a model converted that way can also be used in KSNN, so I think the KSNN convert tool should work as well.