excellent! thanks a lot

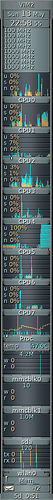

Yup. @Khadas, any updates on this? This is gnucash starting on my VIM2: allocated to CPU5, running at 100% CPU but at only 1000 MHz!

@numbqq Definitely, this issue must be looked into. Right now, since the kernel allocates threads to the slow cores instead of the fast ones, the VIM2 ends up performing worse than the VIM1, and we paid twice as much for it.

Well, when I ran sysbench on my latest build, I got a different result.

root@Khadas:~# uname -a

Linux Khadas 3.14.29 #8 SMP PREEMPT Thu May 24 18:25:14 CST 2018 aarch64 aarch64 aarch64 GNU/Linux

root@Khadas:~# cat /etc/lsb-release

DISTRIB_ID=Ubuntu

DISTRIB_RELEASE=16.04

DISTRIB_CODENAME=xenial

DISTRIB_DESCRIPTION="Ubuntu 16.04.4 LTS"

root@Khadas:~# sysbench --version

sysbench 0.4.12

root@Khadas:~#

Test script:

root@Khadas:~# cat test.sh

#!/bin/bash

echo performance >/sys/devices/system/cpu/cpu0/cpufreq/scaling_governor

echo performance >/sys/devices/system/cpu/cpu4/cpufreq/scaling_governor

for o in 1 4 8 ; do

for i in $(cat /sys/devices/system/cpu/cpu0/cpufreq/scaling_available_frequencies) ; do

echo $i >/sys/devices/system/cpu/cpu0/cpufreq/scaling_max_freq

echo -e "$o cores, $(( $i / 1000)) MHz: \c"

sysbench --test=cpu --cpu-max-prime=20000 run --num-threads=$o 2>&1 | grep 'execution time'

done

done

sysbench --test=cpu --cpu-max-prime=20000 run --num-threads=8 2>&1 | egrep "percentile|min:|max:|avg:"

Result:

root@Khadas:~# ./test.sh

1 cores, 100 MHz: execution time (avg/stddev): 374.5317/0.00

1 cores, 250 MHz: execution time (avg/stddev): 147.9295/0.00

1 cores, 500 MHz: execution time (avg/stddev): 73.3578/0.00

1 cores, 667 MHz: execution time (avg/stddev): 55.1783/0.00

1 cores, 1000 MHz: execution time (avg/stddev): 36.6099/0.00

1 cores, 1200 MHz: execution time (avg/stddev): 30.5101/0.00

1 cores, 1512 MHz: execution time (avg/stddev): 25.8465/0.00

4 cores, 100 MHz: execution time (avg/stddev): 93.0469/0.01

4 cores, 250 MHz: execution time (avg/stddev): 36.8459/0.01

4 cores, 500 MHz: execution time (avg/stddev): 18.3660/0.00

4 cores, 667 MHz: execution time (avg/stddev): 13.7663/0.00

4 cores, 1000 MHz: execution time (avg/stddev): 9.1601/0.00

4 cores, 1200 MHz: execution time (avg/stddev): 7.6329/0.00

4 cores, 1512 MHz: execution time (avg/stddev): 6.4740/0.00

8 cores, 100 MHz: execution time (avg/stddev): 46.8414/0.01

8 cores, 250 MHz: execution time (avg/stddev): 26.5825/0.01

8 cores, 500 MHz: execution time (avg/stddev): 15.3673/0.01

8 cores, 667 MHz: execution time (avg/stddev): 12.0014/0.01

8 cores, 1000 MHz: execution time (avg/stddev): 8.3543/0.00

8 cores, 1200 MHz: execution time (avg/stddev): 7.0645/0.01

8 cores, 1512 MHz: execution time (avg/stddev): 6.0570/0.01

min: 2.58ms

avg: 4.86ms

max: 136.68ms

approx. 95 percentile: 36.82ms

root@Khadas:~#

Mainline kernel 4.17 is due out soon, with significant driver additions for GXM/S912 support, and even a kvim2 dtb, so it may be worth trying it…

Do you see the problem? Sysbench runs entirely inside the CPU (caches). Running on 4 cores, the benchmark must finish in 1/4 of the time it takes on 1 core. With 8 cores, the time has to halve again compared to 4 cores.

Now look at the numbers above and especially result variation min/avg/max (or did heavy throttling occur?)
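To put numbers on that argument, here is a minimal sketch that computes the speedup from the 1512 MHz execution times quoted in the results above. The `speedup` helper is a name made up for this illustration; it is not part of sysbench.

```shell
#!/bin/bash
# speedup BASE_SECONDS NEW_SECONDS
# Prints how many times faster the second run is than the first.
# (Hypothetical helper for illustration only.)
speedup() {
    awk -v base="$1" -v new="$2" 'BEGIN { printf "%.2f\n", base / new }'
}

# Execution times at 1512 MHz, copied from the results above.
t1=25.8465   # 1 thread
t4=6.4740    # 4 threads
t8=6.0570    # 8 threads

echo "1 -> 4 threads: $(speedup "$t1" "$t4")x (expected by the argument above: 4.00x)"
echo "4 -> 8 threads: $(speedup "$t4" "$t8")x (expected by the argument above: 2.00x)"
```

With those inputs, the step from 1 to 4 threads comes out at about 3.99x, while the step from 4 to 8 threads is only about 1.07x.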

Cpufreq and scheduling are totally broken on the S912, at least with the 4.9 kernel and current boot blobs.

The test script has a defect anyway: you are only changing the frequency of cores 0-3. Cores 4-7 run at 1000 MHz throughout. I haven’t bothered to try to sort this out for multiple reasons, not least because in the early part of the script (1 core) all the early runs on my machine land on core 4 at 1000 MHz rather than on one of cores 0-3 at the set value.
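One way to see this while the benchmark runs is to print the frequency each core currently reports. The sketch below assumes the standard Linux cpufreq sysfs layout (the same directory the test script writes to) and reads `scaling_cur_freq`, which is in kHz. The function name `list_cpu_freqs` and its optional directory argument are made up for this example.

```shell
#!/bin/bash
# list_cpu_freqs [SYSFS_CPU_DIR]
# Prints "cpuN: X MHz" for every core that exposes a cpufreq directory.
# The optional argument is only there so the function can be pointed at a
# different tree; by default it reads the usual sysfs location.
list_cpu_freqs() {
    local root="${1:-/sys/devices/system/cpu}"
    local f khz cpu
    for f in "$root"/cpu[0-9]*/cpufreq/scaling_cur_freq; do
        [ -r "$f" ] || continue     # skip cores without a readable cpufreq entry
        khz=$(cat "$f")
        cpu=${f#"$root"/}           # strip the leading directory
        cpu=${cpu%%/*}              # keep only "cpuN"
        echo "$cpu: $(( khz / 1000 )) MHz"
    done
}
```

Calling `list_cpu_freqs` in a second terminal during a run should show whether cores 4-7 follow the value written to cpu0's `scaling_max_freq` or stay where they are.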

Another reason is that I really don’t see much point in running old versions of software unless we are regression testing something! Obviously, sysbench moving the goalposts between v0.4.12 and v1.0.11 doesn’t help. But, as per g4b42, I would rather move forward from 4.9.40 to 4.17 to try to make progress than regress to 3.14.29.

I am running:

root@VIM2:~# uname -a

Linux VIM2.dukla.net 4.9.40 #2 SMP PREEMPT Wed Sep 20 10:03:20 CST 2017 aarch64 aarch64 aarch64 GNU/Linux

root@VIM2:~# cat /etc/lsb-release

DISTRIB_ID=Ubuntu

DISTRIB_RELEASE=18.04

DISTRIB_CODENAME=bionic

DISTRIB_DESCRIPTION="Ubuntu 18.04 LTS"

root@VIM2:~# sysbench --version

sysbench 1.0.11

@numbqq @Gouwa Any news on this? It’s been almost two months and, honestly, you don’t seem to care much about this issue. I think that your flagship performing worse than the VIM1 in every use case except those using 8 threads is something to care about.

The truth is I am getting very disappointed with how you are dealing with this. Lots of posts in other threads about new accessories, and no attention to an important design flaw in the base product that has been clearly demonstrated. I don’t want to jump to conclusions, but I really expected more.

Hi huantxo,

We have asked Amlogic about this issue, but they said they don’t limit the CPU frequency in the BL blobs, so we haven’t found a way to resolve this yet, sorry… But we will talk to Amlogic again when we have enough evidence.

In which cases does VIM2 perform worse than VIM1? Could you kindly provide some scenarios?

Thanks.

Well, does this thread not provide “enough evidence”? I assume you have read all the posts, particularly those made by @tkaiser. What further evidence do you need? Those numbers are absolutely clear.

Again, there are several examples in this thread. The closest one is here, just five posts above, where the user shows that VIM2 binds single-threaded processes to the slow cores, therefore running them at 1.0 GHz (while VIM1 runs at 1.5 GHz, or so it claims). There are other posts in this thread, and in other threads in this forum, like for example this one.

Of course, the fact that the S912 performs worse than the S905 in certain use cases had already been pointed out almost two years ago, and I thought you were already aware of it.

The fact that you act as if you were unaware of things that have been so clearly exposed and demonstrated in this same forum only confirms my feeling that you do not care about this issue. As I said, it really disappoints me. I had hoped that Khadas would be a company different from those that only aim to sell TV boxes to consumers who don’t know what they are buying. I hope that Khadas cares about the things that matter to makers, developers and advanced users. We have done our best to give you solid data in this forum, and some community members are even investigating how to improve the blobs. I hope the story ends well, and that the hopes we put in this company are not disappointed.

Mmm, I notice another month has passed.

It is fun to see my same use case on a proper big.LITTLE implementation: gnucash allocated to a big core at max MHz throughout means a 55s load time, as opposed to 128s on VIM2.

There is a lot to digest here. So when using multiple cores the CPU clock will be 1.2 max, and this also means that using the hardware encryption has a lot to do with this as well.

@numbqq It’s been two years, and still the issue persists. CPU scheduler assigns the tasks randomly to the small cores sometimes, therefore making the board much slower than it should be.

There are two possible solutions to this:

- Ask Amlogic for a boot binary that sets the frequency of all eight cores to 1.5 GHz

- Improve the CPU scheduling, so demanding tasks are always assigned to the fast cores, as in all big.LITTLE implementations.

Would you please look into this? That would be very kind of you, and would prove that Khadas is a company that cares for their customers.

I stumbled across this thread when looking for something else, and the issue of making workloads prefer the higher-clocked cores over the lower-clocked ones is easily solved, see:

@numbqq if you can run some tests and confirm back to me, I will send this upstream to the kernel.

Confirmed to work!

Here are some numbers, using the small but handy tool ‘mhz’ (GitHub - wtarreau/mhz: CPU frequency measurement utility), and also 7-zip single-core benchmark.

Before your patch:

root@kvim2:~/src/mhz# taskset -c 1 ./mhz 1 100000

count=645643 us50=22849 us250=114250 diff=91401 cpu_MHz=1412.770

root@kvim2:~/src/mhz# taskset -c 5 ./mhz 1 100000

count=413212 us50=20725 us250=103619 diff=82894 cpu_MHz=996.965

root@kvim2:~/src/mhz# ./mhz 1 100000

count=413212 us50=20725 us250=103700 diff=82975 cpu_MHz=995.992

root@kvim2:~/src/mhz# ./mhz 1 100000

count=413212 us50=20726 us250=103645 diff=82919 cpu_MHz=996.664

root@kvim2:~/src/mhz# ./mhz 1 100000

count=413212 us50=20713 us250=103631 diff=82918 cpu_MHz=996.676

root@kvim2:~/src/mhz# ./mhz 1 100000

root@kvim2:~/src/mhz# taskset -c 1 7z b -mmt1

Tot: 99 1094 1085

root@kvim2:~/src/mhz# taskset -c 5 7z b -mmt1

Tot: 99 819 812

root@kvim2:~/src/mhz# 7z b -mmt1

Tot: 99 818 811

root@kvim2:~/src/mhz# 7z b -mmt1

Tot: 99 819 812

As can be seen, the results of both ‘mhz’ and ‘7-zip’ are the same when forced to run on the small cores with taskset, and when the kernel schedules the task freely.
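To check the same thing for an arbitrary process, the following sketch reports which core a PID last ran on and which cluster that core belongs to. It assumes the procps `ps` (for the `psr` column) and, based only on the measurements above, that cores 0-3 are the faster cluster on this board. The names `cluster_of` and `where_is` are invented for this example.

```shell
#!/bin/bash
# cluster_of CORE
# Maps a core number to its cluster on this board.
# Assumption taken from the measurements above: cores 0-3 are the faster cluster.
cluster_of() {
    if [ "$1" -le 3 ]; then echo fast; else echo slow; fi
}

# where_is PID
# Prints the core the process last ran on (procps "psr" column) and its cluster.
where_is() {
    local core
    core=$(ps -o psr= -p "$1" | tr -d ' ')
    [ -n "$core" ] || return 1      # no such process, or ps lacks the psr column
    echo "pid $1: core $core ($(cluster_of "$core"))"
}
```

For example, `where_is "$(pgrep -n gnucash)"` would show where the most recently started gnucash process is running. On an unpatched kernel, starting a program with `taskset -c 0-3 <command>` restricts it to cores 0-3, in the same way `taskset -c 1` is used in the runs above.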

After your patch:

root@kvim2:~/mhz# ./mhz 1 100000

count=516515 us50=18383 us250=91477 diff=73094 cpu_MHz=1413.290

root@kvim2:~/mhz# ./mhz 1 100000

count=516515 us50=18296 us250=91448 diff=73152 cpu_MHz=1412.169

root@kvim2:~/mhz# ./mhz 1 100000

count=516515 us50=18289 us250=91441 diff=73152 cpu_MHz=1412.169

root@kvim2:~/mhz# 7z b -mmt1 ; 7z b -mmt1 ; 7z b -mmt1

Tot: 99 1072 1063

Tot: 99 1079 1070

Tot: 99 1076 1067

I trimmed the rest of the 7-zip output for the sake of brevity.

@chewitt Thank you so much, man! It is time to take this piece of hardware out of the drawer where it has been lying for the last two years.

P.S: It would be nice to hear something from the Khadas staff about this issue too, just so they show that they care.

Good finding.

I will test this patch and get back with some feedback.

Thanks

Great job.

Now I may have to re-review this board.

It was disappointing for an octa-core. Now I might run BOINC on the board.